Artificial intelligence is now built into many of the tools Data Engineers use every day. This article shows how AI is changing data exploration, querying, and management in three of them: SQL Server Management Studio (SSMS), Azure Data Factory, and Snowflake.

SQL Server Management Studio

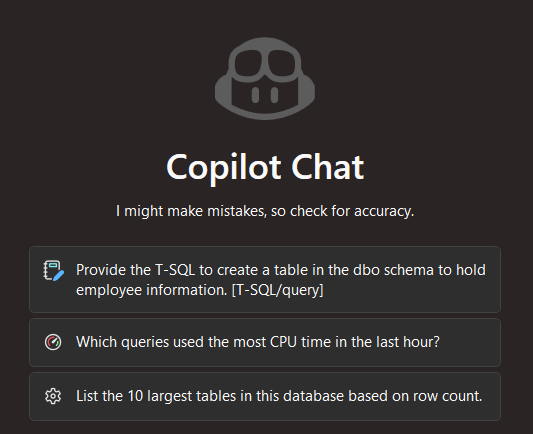

SQL Server Management Studio is one of the most widely used relational database management environments in a business context. It provides features to configure, monitor, and manage SQL Server instances and their databases. Starting with version 22, SSMS includes GitHub Copilot, a feature that helps write T-SQL more quickly and accurately with AI support. Copilot also answers general SQL questions and assists with administrative tasks directly within the SSMS environment.

What changes in practice?

The most immediate benefit is contextual SQL suggestions. Copilot recognizes the tables and columns of the connected schema and proposes completions while queries are being written, including relevant filters, possible groupings, and joins that match the data model. For those who write dozens of queries per day, the reduction in effort is considerable.

Copilot Chat also allows questions to be asked directly in the editor: which queries used the most CPU in the last hour, which tables hold the most rows, or how a specific stored procedure could be simplified. The responses take into account what is open in the editor.
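As an illustration, the CPU question could be answered with a query along the following lines. This is a hand-written sketch, not actual Copilot output. It relies on the `sys.dm_exec_query_stats` and `sys.dm_exec_sql_text` dynamic management objects and assumes the login is allowed to view server state.

```sql
-- Top 10 cached statements by accumulated CPU time, restricted to
-- statements that last ran within the past hour.
-- Caveat: total_worker_time is cumulative since the plan was cached,
-- so the filter limits WHICH statements appear, not the period measured.
SELECT TOP (10)
    qs.total_worker_time / 1000 AS total_cpu_ms,  -- total_worker_time is in microseconds
    qs.execution_count,
    qs.last_execution_time,
    st.text AS batch_text
FROM sys.dm_exec_query_stats AS qs
CROSS APPLY sys.dm_exec_sql_text(qs.sql_handle) AS st
WHERE qs.last_execution_time >= DATEADD(HOUR, -1, GETDATE())
ORDER BY qs.total_worker_time DESC;
```

Whatever query the assistant proposes, it is worth reading it the same way as this one: check which system views it uses and what the numbers actually measure before acting on the result.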

There is, however, a limitation to keep in mind: Copilot only accesses the schema and the code, not the data itself. Questions that rely on business context still require human intervention.

Azure Data Factory

Azure Data Factory (ADF) is Microsoft’s cloud-based data integration platform. It enables the construction and orchestration of pipelines that move, transform, and load information between systems, from relational databases to Data Lakes, and from external APIs to data warehouses.

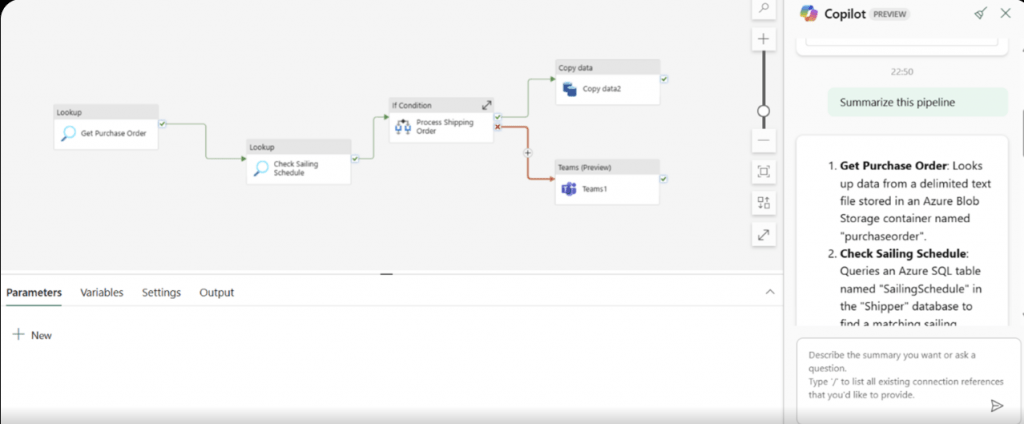

AI integration in ADF occurs through Copilot for Azure Data Factory, available directly in ADF Studio. Unlike Copilot in SSMS, which primarily assists with code writing, Copilot in ADF helps with the design, configuration, and diagnostics of data integration pipelines.

Pipeline Creation

Instead of manually configuring each activity, connector, and transformation, the user describes what they want: “copy data from a SQL Server database to Azure Data Lake Storage and filter records prior to 2020.” Copilot interprets the request and generates a complete pipeline proposal.

This is especially useful in teams with varying levels of experience. Those less familiar with the platform can produce a functional first draft and then have it validated by someone more experienced, rather than starting from scratch with no reference.

Monitoring and Diagnostics

When a pipeline fails, Copilot analyzes the error logs and provides an explanation of the issue, along with recommended steps for its resolution. This can significantly reduce diagnostic time compared to manually reading technical logs.

Snowflake

Snowflake is a cloud data platform designed to store and analyze large volumes of information. What sets it apart from other platforms is the way it incorporates artificial intelligence as a native capability through Cortex.

Cortex in Snowflake

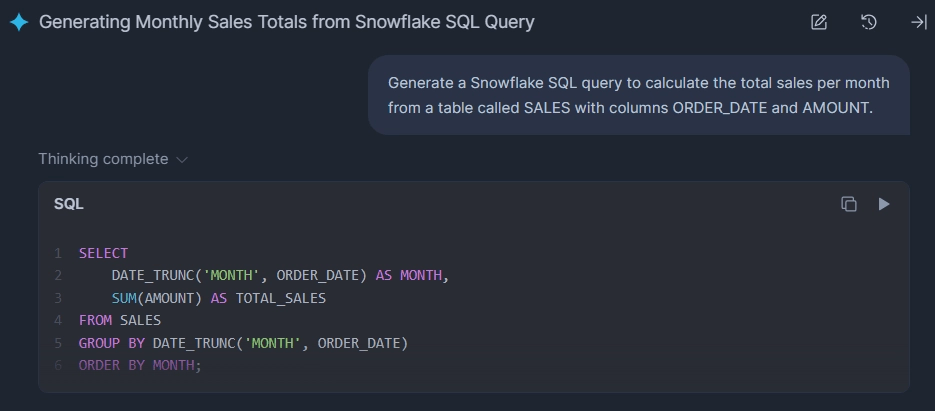

Cortex runs large language models directly on the data stored in Snowflake, without the need to export, move, or integrate it with external services. It provides native SQL functions that allow AI operations to be performed as if they were regular queries. Sentiment analysis, text classification, entity extraction, document summarization, and translation become accessible directly within a query, with no additional configuration.
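A minimal sketch of what this looks like in a query is shown below. The table `customer_reviews` and its columns are hypothetical, and the example assumes the reviews are written in Portuguese. The three functions are part of the `SNOWFLAKE.CORTEX` schema, but their availability can depend on the account's region and on the privileges granted to the role running the query.

```sql
-- Hypothetical table: customer_reviews(review_id, review_text)
SELECT
    review_id,
    -- Sentiment score between -1 (negative) and 1 (positive)
    SNOWFLAKE.CORTEX.SENTIMENT(review_text)             AS sentiment_score,
    -- Short summary of the review text
    SNOWFLAKE.CORTEX.SUMMARIZE(review_text)             AS summary,
    -- Translation from Portuguese ('pt') to English ('en')
    SNOWFLAKE.CORTEX.TRANSLATE(review_text, 'pt', 'en') AS review_text_en
FROM customer_reviews
LIMIT 10;
```

The `LIMIT` is deliberate: these functions consume credits according to the amount of text processed, so testing on a small sample first is a sensible habit.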

Cortex Analyst

Cortex Analyst targets a different user profile. While Cortex is a tool used by technical users to programmatically enrich data, Analyst is designed for those who need answers but are not proficient in SQL.

In practice, a technical user or analyst defines a semantic model over the tables, creating an interpretive layer that maps business concepts to technical structures. From there, any user can query the data in natural language and obtain more reliable answers, because the semantic model constrains how each question is interpreted.

What makes all of this possible?

The separation of storage and compute makes it possible to combine these capabilities. Virtual warehouses scale independently, allowing different tasks to be executed in parallel.

This way, Cortex can run AI models while a heavy analytical query runs on the same data, each on its own virtual warehouse, without the two competing for compute. In a traditional architecture, this would typically require data replication or separate execution windows.
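The idea can be sketched with two independent virtual warehouses. The warehouse names, sizes, and the `customer_reviews` table with its columns are hypothetical and chosen only for illustration.

```sql
-- One warehouse for AI enrichment, one for reporting (names are hypothetical).
CREATE WAREHOUSE IF NOT EXISTS enrichment_wh
    WAREHOUSE_SIZE = 'SMALL'
    AUTO_SUSPEND = 60      -- suspend after 60 seconds of inactivity
    AUTO_RESUME = TRUE;

CREATE WAREHOUSE IF NOT EXISTS reporting_wh
    WAREHOUSE_SIZE = 'LARGE'
    AUTO_SUSPEND = 300
    AUTO_RESUME = TRUE;

-- Session 1: AI enrichment runs on its own compute.
USE WAREHOUSE enrichment_wh;
SELECT review_id,
       SNOWFLAKE.CORTEX.SENTIMENT(review_text) AS sentiment_score
FROM customer_reviews;

-- Session 2: an analytical query reads the same table on separate compute.
USE WAREHOUSE reporting_wh;
SELECT product_id,
       COUNT(*) AS review_count
FROM customer_reviews
GROUP BY product_id;
```

Both sessions read the same stored data. Only the compute is separate, which is why neither workload needs its own copy of the table.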

AI in Data Engineering: Accelerator, Not a Replacement

The adoption of AI in Data Engineering tools is not a silent revolution; it is a visible change in the pace and quality of daily work.

Copilot in SSMS reduces the time spent writing and validating queries, Copilot in ADF removes part of the diagnostic effort, and Snowflake’s Cortex brings AI capabilities directly into the data pipeline, without the need for additional infrastructure.

What all these integrations have in common is their positioning: AI functions as a support layer, not a replacement for the engineer. Judgment regarding the data model, business criteria, and the quality of results remains human. What changes is that this judgment can now be exercised with less friction and more information available.

At B2F, we work daily with these tools in real-world contexts. If you want to understand how they can be applied to your specific situation, talk to us.